Steve Levinson

Steve Levinson, Vice President & Chief Information Security Officer (CISO) of OBS Global’s Cybersecurity practice, leads a vibrant, pragmatic, risk-based, business-minded security consulting practice that focuses on right-sized security. The practice offers a wide variety of services, including advisory services, governance/program management and risk assessments (PCI, HIPAA, ISO, NIST, FedRAMP and preparation for SOC 2), technical security services (vulnerability scanning, penetration testing, red teaming, and secure code development), data protection and privacy, cloud security, and specialized security services for the healthcare and financial industries. Steve is considered a thought leader in the cybersecurity community, delivering captivating presentations and webinars and having penned dozens of insights for many publications. Steve is an active CISSP, CISA, and QSA with an MBA from Emory Business School, and he has over 20 years of IT security experience and over 25 years of IT experience. Steve’s strong technical and client management skills, combined with his holistic approach to risk management, resonate with clients and employees alike. He has performed or participated in hundreds of risk assessments and compliance assessments, starting his consulting career with Verisign and AT&T Consulting, where he provided cybersecurity consulting leadership. Since then, Steve has served as a key strategic advisor for hundreds of clients and has gained the trust of many industry partners and affiliates, earning him a seat as a respected voice around the PCI SSC’s Global Assessors Round Table. 
In addition to serving as virtual CISO for several clients, Steve has performed security architecture reviews, network and systems reviews, security policy development, and vulnerability assessments, and has served as a cybersecurity subject matter expert to client and partner stakeholders globally. Wherever Steve’s travels take him – and he travels a lot – he makes friends and finds time in his busy calendar to gather as many local like-minded security professionals and colleagues, old and new, as he can to share ideas, foster connections, and build on those ideas. His true professionalism and his earnest nature together make up the ‘magic’ that fuels the passion of those he leads. It was exactly this combination of Steve’s vision, passion, and connections around the world that recently helped form OBS’s EMEA division, expanding the organization’s security and digital transformation footprint internationally. Keeping up with the latest security trends and threats is easier than keeping up with Steve; when he’s not connecting with clients or fighting cybercrime, Steve is making meaningful memories with his family, keeping pace with his beloved pups, catching the early surf just after sunrise, or charging down a mountain slope. “Where’s Steve?” is a common phrase jested amongst colleagues around the virtual OBS office. But not to worry: if you miss him, he will circle back again soon.

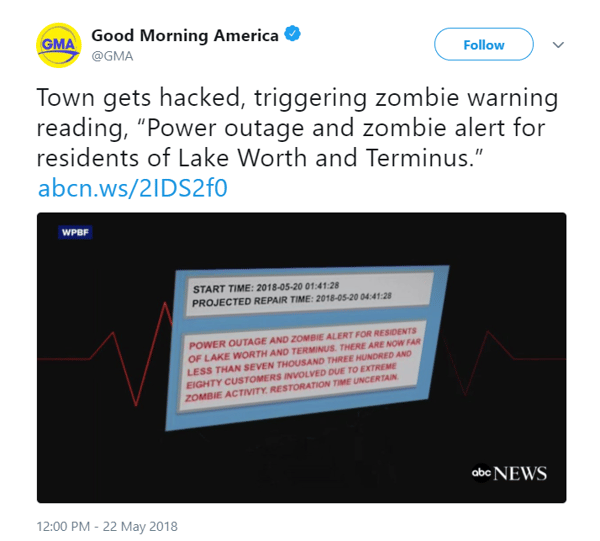

By now, most of the world has heard about the alarm pertaining to a zombie alert in Lake Worth, Florida.

Do we think that zombies were getting their day in the sun, or could it possibly be that whoever was responsible for writing the power alert application (or for testing it) was in some sort of zombie state at the time?

The headline looked a bit like this:

I’m happy to report that there were no casualties and, to the best of my knowledge, all residents have emerged from this kerfuffle with their brains intact, because as you may have guessed… this was a false alarm. While on the surface this hack gave us mere mortals a good chuckle, it is a good reminder that we should be diligent in ensuring our applications are reasonably secure, as the consequences of this poor coding could have been much more significant and harmful.

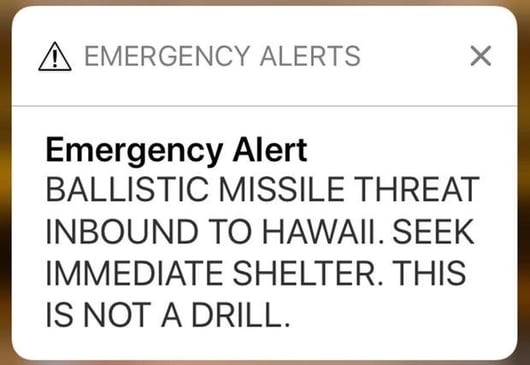

In a related non-incident from a couple of months ago, residents of Hawaii received this alert:

While this wasn’t necessarily a ‘programming faux pas’, it certainly was induced by human error. Basically, the ‘wrong button’ was pushed during a shift change at the Hawaiʻi Emergency Management Agency.

Both of these events bring home the point that oftentimes the issue is caused by carbon (people) and not silicon (computers). We humans will always make mistakes – it’s just our nature.

So it’s important to adhere to a few basic thought processes to minimize the risk associated with these mistakes:

- Depending on how critical the asset/system/whatever is, you should apply the right amount of testing/due care to help ensure that it was ‘done right’. In the zombie case, perhaps it would have made sense to review the code, perform a penetration test on the application, and/or monitor the application for any anomalous changes. You can learn more about these services here.

- Do you have a process in place to include the right amount of security in your applications? Do your developers have a good understanding of what it takes to write secure applications, such as the guidance published by OWASP?

- Do you have the capability to build in controls that make us verify actions? For example, perhaps there could have been the equivalent of an “are you sure” button during the missile non-crisis in Hawaiʻi.

- Just because something seems anomalous, is it really true? We should not fall victim to blindly believing alerts – we should trust but verify with a second source, or perhaps in today’s world, we should verify before trusting. In fact, with all of the discussions around "fake" and "real" news, we owe it to ourselves to check on the trustworthiness of the news – not only the source but how the information came to be in the first place.
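To make the “are you sure” idea a little more concrete, here is a minimal sketch in Python of a control that forces verification before a live alert goes out. It is purely illustrative and is not how either of the systems above actually works: the names `Alert`, `dispatch_alert`, `ConfirmationRequired`, and the phrase `SEND LIVE ALERT` are all made up for this example. The assumption is that drills can go out freely as long as they are clearly labeled, while a live alert needs both a retyped confirmation phrase and a second person to approve it.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical phrase the operator must retype before a live alert goes out.
LIVE_CONFIRMATION_PHRASE = "SEND LIVE ALERT"


class ConfirmationRequired(Exception):
    """Raised when a live alert is requested without valid confirmation."""


@dataclass(frozen=True)
class Alert:
    message: str
    is_drill: bool


def dispatch_alert(alert: Alert, requested_by: str,
                   typed_phrase: Optional[str] = None,
                   approved_by: Optional[str] = None) -> str:
    """Return the text that would be broadcast, or raise if a check fails."""
    if alert.is_drill:
        # Drills are low risk, so they go out clearly labeled with no extra steps.
        return f"[DRILL] {alert.message}"

    # Check 1: the "are you sure" step, done by retyping a phrase.
    if typed_phrase != LIVE_CONFIRMATION_PHRASE:
        raise ConfirmationRequired("live alert needs the confirmation phrase")

    # Check 2: a second person, different from the requester, must approve.
    if not approved_by or approved_by == requested_by:
        raise ConfirmationRequired("live alert needs a second approver")

    return f"[LIVE] {alert.message}"
```

With a control like this, pushing the ‘wrong button’ on its own is not enough: calling `dispatch_alert(Alert("Take shelter", is_drill=False), "operator1")` raises `ConfirmationRequired` instead of broadcasting anything. Whether a retyped phrase, a second approver, or both is the right amount of friction depends on how critical the system is.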

Closing remarks:

It is everyday events like these, strange as they may seem, that should help remind us that we need to pay attention to what is oftentimes the weakest link – the thing between the chair and the computer.

Have you heard any similar security stories to these? I'd love to hear them! Feel free to leave a comment below.

To find out how Online's Risk, Security and Privacy practice can help prevent issues like those mentioned above, check out our website.
